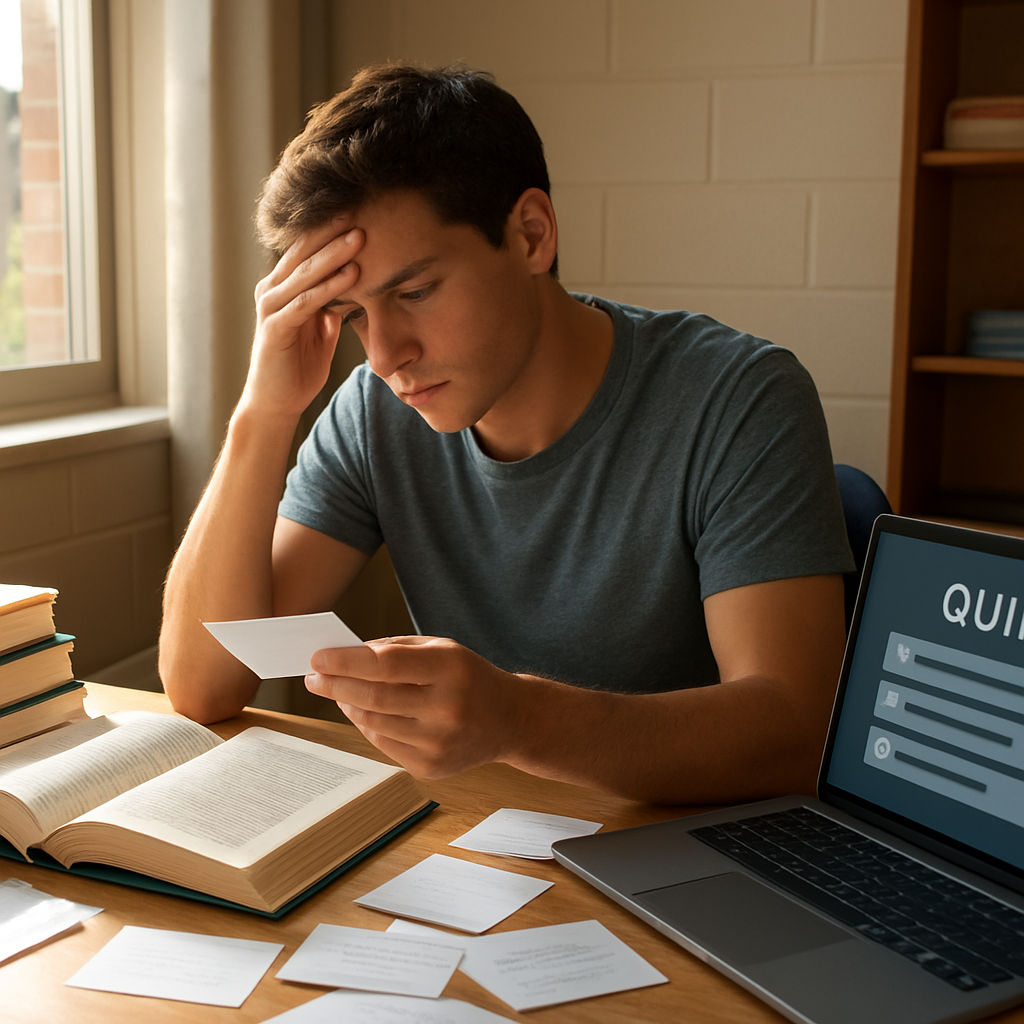

Ever sat through a lecture, crammed a textbook the night before, and then stared at a multiple‑choice sheet feeling like you were guessing at random? You’re not alone—many of us have that gut feeling that exams capture only a sliver of what we actually know.

Think about the last time you aced a test by memorising dates, yet struggled to apply that knowledge in a real‑world project or conversation. That disconnect is why the question ‘Do Exams Measure Real Knowledge?’ keeps popping up on student forums, coffee‑shop chats, and even on our own platform.

In our experience at Questions Young People Ask, we hear Gen Z students say exams feel like a sprint rather than a marathon of learning. One student described how a physics exam tested formula recall, but when she tried to build a simple circuit for a hobby project, the same concepts slipped away. That’s a classic sign: the test measured recall, not understanding.

Data backs this up. A 2023 study from the University of Cambridge found that students who relied on rote memorisation scored 12% lower on problem‑solving tasks three months after the exam compared to peers who used active learning techniques. It isn’t just about grades; it’s about retaining knowledge that matters in life and work.

So, what can you do right now? Start by asking yourself: Am I learning to pass the test, or am I learning to apply what I learn? Here are three quick steps you can try tonight:

- After each study session, write a one‑sentence summary in your own words—no jargon.

- Pair up with a classmate and explain a concept aloud; teaching reinforces true understanding.

- Use a simple session summary template to capture what you actually grasped versus what you memorised. Our guide, How to Create an Effective Session Summary Template for Review, walks you through it.

If you’re curious about how exams stack up against intelligence, check out our deep dive, Do Exams Really Test Intelligence? It breaks down the research and gives you a clearer picture of where exams succeed and where they fall short.

Bottom line: exams can indicate certain skills, but they rarely capture the full spectrum of real knowledge. By mixing reflective practices with active learning, you can bridge that gap and walk out of the exam room with confidence that you truly know the material.

TL;DR

If you’re wondering whether exams actually capture what you truly know, the short answer is: they often miss deeper understanding and real‑world application.

Instead, try active-learning habits like summarising each study session and teaching concepts to a friend. In our experience at Questions Young People Ask, these habits help knowledge last well beyond exam day.

Understanding What Exams Test

Ever sat in a silent hall, pencil in hand, wondering why the questions feel like they belong to a different universe? You’re not alone. Most of us have that nagging feeling that exams are measuring something else—maybe our ability to guess under pressure rather than what we truly know.

Let’s break it down. Exams traditionally test three things: recall, application, and analysis. Recall is the easiest to spot—think of those flash‑card drills that make you spout dates or formulas. Application steps it up a notch, asking you to plug a concept into a familiar problem. Analysis tries to push you further, demanding you compare, critique, or create something new.

But here’s the kicker: the balance is usually skewed toward recall. In a typical multiple‑choice test, 70% of the items are straight‑memory. That means you can ace the test by memorising a list, yet still feel clueless when you need to use that knowledge in a real‑world project.

And what does that look like for Gen Z students? Picture a college sophomore cramming for a biology exam. She can name every organ in the human body, but when her group project asks her to design a simple home‑brew bioreactor, the details slip away. The exam measured that she could list, not that she could build.

Why the focus on recall?

Exams are designed for efficiency. A professor can grade a 100‑student class in a few hours if the questions are mostly fact‑based. It’s a logistical convenience, not an educational ideal. That’s why you often hear students say, “I know the material, but the test never lets me show it.”

Think about it: if you’re constantly training for a sprint, you’ll never develop the stamina for a marathon. The same applies to learning. When the assessment rewards short‑term memorisation, the study habits follow suit.

What really gets tested?

When an exam does include application or analysis, it often disguises the demand. A “case‑study” question might still be answered with a bullet‑point list that mirrors the textbook, not with a genuine problem‑solving process. That’s why many students feel the test never captures their creative thinking.

In our experience at Questions Young People Ask, we see a pattern: students who supplement memorisation with active‑learning tricks—like teaching a concept to a friend or summarising a lesson in their own words—perform better on those higher‑order questions. It’s not magic; it’s the brain wiring itself to retrieve information in flexible ways.

Want proof? The research is covered in our deep dive, Do Exams Really Test Intelligence?

How to spot what’s really being tested

Next time you get a syllabus, scan for verbs. “Define” and “list” point to recall. “Apply,” “evaluate,” or “design” signal higher‑order thinking. If most verbs are low‑level, you can safely assume the exam leans heavily on memorisation.

Now, a quick tip for bridging the gap: after each study session, write a one‑sentence summary in plain language—no jargon. Then, use a session summary template to note where you felt solid and where you were just repeating facts. This habit forces you to translate recall into understanding.

And if you’re looking for a little extra brain‑fuel while you pull those all‑night study sessions, you might explore great supplement options that claim to support focus and stamina. Just remember, no pill replaces good study habits.

Bottom line: exams do test something—usually the ability to recall under pressure. They rarely capture the deeper, transferable knowledge you’ll need after the test is over. By recognising the bias toward memorisation, you can tailor your study strategy to include more application‑focused practice. That way, when the real world throws a problem at you, you won’t be scrambling for a definition—you’ll already have the tools to solve it.

Common Misconceptions About Exams

Ever heard someone say, “If you can ace a test, you must be super smart”? That’s a classic myth we keep bumping into on campus corridors.

First misconception: exams measure intelligence. In reality, they often measure how well you can cram facts under pressure, not how you solve problems in a messy real‑world setting.

Second misconception: a high score guarantees you’ll remember the material forever. Think about the last time you aced a history quiz but struggled to explain why the French Revolution mattered in a debate. The exam captured a snapshot, not a lasting understanding.

Third misconception: all exam formats are created equal. Multiple‑choice, short-answer, and essay questions each tap different skills. Yet many students assume any test will reflect the same depth of knowledge.

Myth #1 – “Exams equal IQ”

We often hear, “I scored 95%, so I’m a genius.” The truth? Tests are designed around specific curricula, not a universal measure of cognitive ability. A student who’s great at memorising dates can shine on a timed recall test, while a peer who thinks critically may falter if the questions don’t ask for analysis.

Does this mean exams are useless? No. They give a quick signal about where your study habits sit, but they’re only one piece of the puzzle.

Myth #2 – “If you pass, you’ve mastered the topic”

Picture this: you breeze through a calculus quiz by plugging formulas into a calculator. Later, you try to model a real‑world problem – like optimising a bike‑sharing system – and the same formulas feel foreign. That’s a red flag that the exam measured recall, not application.

In our experience at Questions Young People Ask, we see Gen Z students celebrate a perfect score, then hit a wall when a professor asks them to design an experiment. The gap tells us the exam missed the “do‑it‑yourself” part of learning.

Myth #3 – “All exams test the same thing”

Ever taken a multiple‑choice quiz that feels like a game of “find the right word,” then a take‑home essay that asks you to argue a position? Those are two very different cognitive loads. Multiple‑choice leans heavily on recognition, while essays demand synthesis and evaluation.

Because many schools rely on a single format, students internalise the wrong study strategies – like endless flashcards – and miss out on practising higher‑order thinking.

Quick checklist to debunk these myths

- Ask yourself: Does the exam ask me to “list” or to “design”? If it’s the former, it’s likely testing surface knowledge.

- After a test, try teaching the same concept to a friend without looking at notes. If you can’t, the exam probably didn’t push you toward true mastery.

- Swap a study session: replace a 30‑minute recap with a 15‑minute mini‑project that uses the same concepts in a new context.

So, do exams measure real knowledge? Not entirely. They give a glimpse, but the deeper, transferable understanding shows up when you apply what you’ve learned outside the test hall.

Next time you stare at a question paper, remember these myths. Use them as a compass to steer your study habits toward real‑world practice instead of pure memorisation.

You’ve got this, and the real test is life itself.

Comparing Exams to Real‑World Application

Ever wondered why a perfect score on a chemistry quiz doesn’t automatically make you a lab‑wizard? That’s the gap we’re digging into right now – the difference between what a timed test asks for and what the real world actually needs.

Exams are built around three core demands: recall facts fast, write coherent answers under pressure, and stay calm while the clock ticks. In contrast, a real‑world project asks you to juggle ambiguity, collaborate, and iterate until something works.

What a traditional exam captures

- Memory retrieval – you pull a formula from your head.

- Speed – you produce an answer in five minutes.

- Structure – you follow a rubric without deviating.

These skills are useful, but they’re only a slice of the full competency pie. A 2023 meta‑analysis cited in the Substack article notes that high‑stress recall can actually suppress working memory for up to 40% of students.

What real‑world work demands

- Problem framing – you decide which part of a challenge is worth solving.

- Collaboration – you bounce ideas off teammates, negotiate trade‑offs, and merge different perspectives.

- Iteration – you prototype, test, fail, and refine.

Picture a group of Gen Z friends building a simple solar charger for a community event. They need to read datasheets (recall), but they also have to sketch a circuit, source components, troubleshoot a short, and explain the design to a non‑technical audience. None of those steps show up on a multiple‑choice sheet.

So, how do we bridge that divide? Here are three concrete actions you can try right after your next exam.

Action 1: Turn a question into a mini‑project

Pick one “list‑the‑steps” exam item and expand it into a 15‑minute hands‑on activity. If the test asked you to list the stages of photosynthesis, grab a leaf, observe chlorophyll under a cheap microscope, and write a short blog post explaining each stage in plain language.

Action 2: Peer‑teach with a twist

Instead of a straight‑up explanation, ask a study buddy to role‑play a client who knows nothing about the topic. You then have to adapt your language, use analogies, and answer spontaneous questions – exactly what the workplace demands.

Action 3: Reflect with a “real‑impact” journal

After each study session, jot down one way the concept could solve a problem you care about – whether it’s budgeting your monthly expenses, improving a fitness routine, or designing a game mechanic. This habit forces you to map theory onto life.

When you start seeing those connections, you’ll notice the exam feels less like a judgment and more like a checkpoint. In fact, many universities already replace pure exams with capstone projects because they better predict future performance.

For a deeper dive into how project‑based learning stacks up against classic tests, check out our Project-Based Learning vs Traditional Exams guide.

And if you’re thinking about the next step – turning this newly‑honed knowledge into a job – tools like EchoApply can help you translate project experience into an AI‑crafted CV that catches recruiters’ eyes.

| Dimension | Exam‑Style Assessment | Real‑World Application |
|---|---|---|
| Focus | Recall & speed | Problem framing & iteration |
| Environment | Silent, timed, isolated | Collaborative, messy, ongoing |
| Outcome | Score on a paper | Usable product or decision |

Try this quick test tonight: pick a recent exam question, set a timer for ten minutes, then rewrite the answer as a how‑to guide for a friend who knows nothing about the subject. You’ll instantly see whether you understood the concept or just memorised it.

Bottom line: exams give you a snapshot, but real‑world tasks build the full movie. By sprinkling mini‑projects, peer‑teaching, and impact‑journaling into your study routine, you turn that snapshot into a story you can actually live.

Alternative Assessment Methods that Capture Real Knowledge

So, you’ve seen how a traditional exam can feel like a speed run that only scratches the surface. If you keep asking yourself, “Do exams measure real knowledge?” you’ll start spotting the gaps between what’s tested and what you actually use day‑to‑day.

That’s why many educators are turning to alternative assessment methods – tools that let you demonstrate what you know in a context that feels real, messy, and collaborative.

Portfolio assessments – your learning scrapbook

Instead of a single score, a portfolio gathers essays, designs, code snippets, or even Instagram posts you created over a semester. When you flip through it, you can trace how your thinking evolved, what feedback you integrated, and where you solved a problem on your own.

Try this: after each project, spend five minutes adding a brief “what I learned” note. Over time, you’ll have a living evidence file you can show a potential employer or use in a capstone interview.

Performance‑based tasks – learning by doing

Performance tasks ask you to complete a real‑world activity, like building a prototype, conducting a user interview, or writing a policy brief, all within a set timeframe. The focus shifts from recalling facts to applying them under conditions that mimic the workplace.

A quick way to try this at home is to pick a recent exam question and turn it into a 15‑minute challenge. For a chemistry equation‑balancing prompt, actually mix safe household chemicals (like baking soda and vinegar) to see the reaction you’re describing. You’ll instantly see whether you understand the concept or just memorised the formula.

Peer‑assessment and self‑reflection – learning together

When you grade a classmate’s work or write a reflective journal, you’re forced to articulate criteria, justify judgments, and spot blind spots. That metacognitive step is missing from most timed tests, yet it’s what helps you transfer knowledge to new situations.

Set up a study circle where each person presents a mini‑lesson, and the group rates it using a simple rubric you co‑create. After the session, write a one‑sentence summary of what the feedback taught you.

Digital badges & micro‑credentials – bite‑size proof of skill

Platforms now offer badges for completing specific tasks – like “Data‑visualisation with Python” or “Design thinking sprint”. These micro‑credentials are linked to a verifiable portfolio, so recruiters see concrete evidence rather than a vague GPA.

If you earn a badge, add a short description of the real problem you solved and the tools you used. It turns an abstract label into a story you can actually talk about in an interview.

Authentic assessment is the umbrella term for any task that mirrors what professionals actually do. Think of a journalism student writing a news article for a campus blog, or a biology major mapping a real ecosystem. Because the stakes feel higher, motivation spikes and retention improves.

When you combine a badge with a reflective journal, you get a powerful narrative: the badge shows the skill, the journal explains the context. That combo is what many employers now scan for on LinkedIn or digital CVs.

In our experience at Questions Young People Ask, students who mix these alternative methods report feeling more confident that they truly understand the material, not just that they can tick a box on a test.

So, the answer to “Do exams measure real knowledge?” is: they give you a snapshot, but these alternative assessments let you capture the whole film. Pick one method that resonates with you, try it in your next study session, and watch how quickly the knowledge sticks.

Practical Tips for Learners to Bridge the Gap

Ever finish an exam and think, “Did I really learn anything?” – you’re not alone. That moment of doubt is the perfect launchpad for turning paper‑pushing into genuine mastery.

1. Flip the Exam Question into a Mini‑Project

Take the most “list‑the‑steps” item you just answered. Instead of writing a bullet list, grab a notebook, set a timer for ten minutes, and actually do the steps. If the question asked you to outline the water cycle, go outside, watch a puddle evaporate, then sketch the cycle with real‑world labels. You’ll see instantly whether the knowledge sticks or just sits on a page.

Why does this work? By adding a physical or creative element, you move from passive recall to active construction – the brain treats it like a real problem, not a memorised fact.

2. Teach‑Back with a Twist

Pair up with a roommate, a study buddy, or even your pet (talking to a cat can be surprisingly helpful). Instead of a straight explanation, ask them to act like a client who knows nothing about the topic. You then have to translate jargon into everyday language, use analogies, and answer curveball questions.

That “role‑play” moment forces you to fill the gaps you didn’t even know existed. If you stumble, note the weak spot and revisit the source material later.

3. The “Real‑Impact” Journal

After each study session, open a fresh note titled “How this helps me today.” Write one concrete way the concept could solve a problem you care about – budgeting your monthly spend, improving your bike‑repair skills, or designing a social‑media post for a club.

Seeing the direct relevance rewires your motivation. It also creates a portfolio of bite-sized stories you can pull into future interviews or résumé bullet points.

4. Create a “Use‑It‑Or‑Lose‑It” Checklist

Every time you close a textbook chapter, ask yourself three quick questions: What’s one thing I can demonstrate right now? How would I explain it to a friend in under a minute? What’s a simple experiment or example I could run tomorrow? Mark “yes” or “no” for each. If you answer “no,” schedule a 5‑minute micro‑task before your next class. The checklist keeps the knowledge active instead of letting it gather dust.

5. Blend Digital Badges with Reflection

Many online courses award badges for completing modules. Don’t let those sit idle. Immediately write a two‑sentence reflection that answers: Which real problem did I solve to earn this badge? Which tool did I actually use? Paste that note into your Questions Young People Ask profile or a personal learning log.

When recruiters scan your badge, they’ll also see the story behind it – a tiny but powerful credibility boost.

6. Schedule a “Gap‑Audit” Every Two Weeks

Set aside 15 minutes on a Sunday evening. Pull out your latest exam papers, project notes, and journal entries. Highlight any concepts that still feel fuzzy. For each, choose one of the tactics above and plan a concrete action for the coming week.

This regular audit turns a one‑off study sprint into a sustainable habit, gradually shrinking the exam‑real‑world gap.

So, what’s the next step? Pick the tip that resonates most with you, try it tonight, and notice the difference. In a few weeks, you’ll look back and realise the exam wasn’t a judgment of your worth – it was just a checkpoint, and you’ve already started building the road beyond it.

Conclusion

So, after all the tactics, the big question still lingers: do exams measure real knowledge? The short answer is no – they give you a snapshot, not the full movie.

We’ve seen how turning a question into a mini‑project, teaching a friend, or writing a real‑impact journal can turn that snapshot into a living story. Those habits keep the information active, so when you walk into a job interview or start a side hustle, the knowledge feels like second nature.

Remember the “gap‑audit” we talked about? Set a reminder for next Sunday, pick one fuzzy concept, and apply one of the tricks above. In a few weeks, you’ll notice the anxiety melt away, and confidence rise.

And if you ever wonder whether you’re still stuck in the exam mindset, ask yourself: could I explain this to a roommate who knows nothing about it? If the answer is yes, you’ve moved beyond pure recall.

We at Questions Young People Ask believe learning should feel practical, not punitive. Keep experimenting, keep reflecting, and let your next exam be just another checkpoint on a journey you’re already mastering.

Take a moment today to write down one real‑world way you’ll use what you just studied, and watch the gap shrink.

FAQ

Do exams measure real knowledge or just memorisation?

Most traditional tests focus on recalling facts under time pressure, so they capture a snapshot of what you can repeat on demand. That’s useful for checking basic coverage, but it rarely proves you can apply the idea in a new context. In other words, a high score tells you you’ve memorised the material, not that you can solve a real‑world problem with it.

How can I tell if an exam question is testing understanding?

Look at the action verb. If the prompt says “list,” “define,” or “state,” it’s likely testing recall. When you see “explain,” “compare,” “design,” or “evaluate,” the question pushes you to reason, synthesise, or create – those are signs of deeper understanding. Try rephrasing the question as a mini‑project; if you can map it to a real task, you’re dealing with genuine comprehension.

What practical study habit helps bridge the gap between exam scores and real‑world skills?

After every study session, write a one‑sentence “real‑impact” note: how could you use this concept tomorrow? For a physics class, you might note, “I can calculate my bike’s braking distance before a hill run.” Then, within 48 hours, act on that note – measure the distance, record the result, and adjust. This tiny loop forces the knowledge out of your head and into your life.

Can I use mini‑projects after an exam to reinforce learning? How?

Absolutely. Pick the question that felt most abstract and turn it into a 10‑minute hands‑on task. If the exam asked you to outline the water cycle, go outside, watch a puddle evaporate, then sketch the cycle with real‑world labels. Document the result in a quick photo or a short paragraph. You’ll instantly see whether the idea sticks or just sits on a page.

Why do many students feel anxious after exams, even when they scored well?

Because the test didn’t ask them to use the knowledge, their brain still treats the material as fragile. The anxiety stems from a hidden fear: “What if I can’t actually do this in a job or a project?” By pairing the exam result with a post‑test action – like teaching a friend or building a tiny prototype – you replace doubt with concrete proof of competence.

What role does teaching a concept to a friend play in measuring real knowledge?

Explaining forces you to organise thoughts, fill gaps, and translate jargon into everyday language. When a roommate asks, “Why does that formula matter?” you have to connect the dots, which reveals any lingering misunderstandings. In practice, a 5‑minute teach‑back session after each chapter often turns a vague memory into a clear, usable skill you can reference later.

How often should I do a “gap‑audit” to keep my knowledge fresh?

We recommend a quick audit every two weeks. Set aside 15 minutes on a Sunday evening, skim your recent notes, and highlight anything that still feels fuzzy. For each fuzzy point, choose one of the tactics above – a mini‑project, a teach‑back, or a real‑impact note – and schedule it for the coming week. Consistent micro‑reviews prevent the knowledge from slipping away between exams.
